What Everyone Should Know About AI's Environmental Impact

At some point in every engagement, someone asks us:

"Isn't AI bad for the environment?"

It's a fair question. And the short answer is "yes." But the longer answer depends on what you count. What model? Whose data? Which stage of the lifecycle? Are you asking about a single prompt or the billions of them running worldwide every day? A lot of very smart people are studying this, and even after years of serious public attention, the numbers still move around a lot depending on who's holding the calculator.

This is the familiar shape of most serious environmental problems: a lot of individually small decisions, made by a lot of different actors, aggregating into something enormous. A tragedy of the commons, where everyone's individual part looks genuinely tiny and the combined weight is still climate-altering. Recycling looks like this. Air travel looks like this. And now AI does, too.

We don't have a clean answer. But after a couple of years doing this work with clients, what we do have is a way to think about it honestly: what actually matters, who's carrying the responsibility, and what any of us can reasonably do about it.

Understanding the lifecycle of AI

When people ask about AI's environmental footprint, they're usually weaving four very different conversations together. It helps to separate them.

Hardware. Manufacturing the chips and servers behind AI models has a footprint of its own. A single NVIDIA HGX H100 baseboard ships with about 1,312 kg of CO₂e in embodied carbon before it's ever plugged in.

Training. Before a model is ever deployed, it must be trained. Training GPT-3 consumed about 1,287 MWh of electricity (enough to power roughly 120 U.S. households for a year) and evaporated around 700,000 liters of freshwater on-site.
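
The household comparison is simple division, and it's worth seeing how it's built. In this sketch, the 1,287 MWh figure comes from the paragraph above. The average U.S. household use of about 10.7 MWh per year is our own assumption, roughly in line with recent U.S. Energy Information Administration averages. Pick a different year's average and the result moves a little.

```python
# Sanity check: how many U.S. households could GPT-3's training energy power for a year?
TRAINING_ENERGY_MWH = 1_287  # GPT-3 training estimate cited in the text

# Assumption (not from the source): average annual electricity use of one U.S. household.
HOUSEHOLD_MWH_PER_YEAR = 10.7


def household_years(energy_mwh: float, household_mwh_per_year: float) -> float:
    """Return how many household-years of electricity an energy total represents."""
    return energy_mwh / household_mwh_per_year


if __name__ == "__main__":
    years = household_years(TRAINING_ENERGY_MWH, HOUSEHOLD_MWH_PER_YEAR)
    print(f"about {years:.0f} U.S. households for a year")
```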

Inference. The part you and your team actually touch. A 2025 study showed that a standard prompt to GPT-4o or Gemini uses roughly 0.3 Wh of energy and 0.3 mL of water. To put it in perspective, one prompt uses about the energy of watching TV for nine seconds. A full phone charge costs about the energy of 50 to 70 prompts. Growing one almond uses roughly the water of 25,000 prompts. Small individually. Enormous collectively.
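
Here is a sketch of how the energy comparisons above can be reproduced from the 0.3 Wh figure. It assumes a TV drawing about 120 W and a phone battery of roughly 15 to 21 Wh. Both are typical values we chose, not numbers from the study, and the phone figure ignores charging losses. The daily total uses a hypothetical one billion prompts, a deliberately low stand-in for the "billions" mentioned earlier.

```python
# Per-prompt energy comparisons, using the ~0.3 Wh figure cited in the text.
PROMPT_WH = 0.3

# Assumptions (not from the source), chosen as typical values:
TV_WATTS = 120               # power draw of a mid-sized TV
PHONE_BATTERY_WH = (15, 21)  # rough range for a modern smartphone battery (charging losses ignored)


def tv_seconds_per_prompt(prompt_wh: float, tv_watts: float) -> float:
    """Seconds of TV viewing that use the same energy as one prompt."""
    return prompt_wh * 3600 / tv_watts  # Wh -> joules (watt-seconds), then divide by watts


def prompts_per_charge(battery_wh: float, prompt_wh: float) -> float:
    """How many prompts use the same energy as one full phone charge."""
    return battery_wh / prompt_wh


def daily_fleet_mwh(prompts_per_day: float, prompt_wh: float) -> float:
    """Total daily energy in MWh for a given number of prompts."""
    return prompts_per_day * prompt_wh / 1_000_000  # Wh -> MWh


if __name__ == "__main__":
    print(f"TV seconds per prompt: {tv_seconds_per_prompt(PROMPT_WH, TV_WATTS):.0f}")
    low, high = (prompts_per_charge(b, PROMPT_WH) for b in PHONE_BATTERY_WH)
    print(f"Prompts per phone charge: {low:.0f} to {high:.0f}")
    # Hypothetical volume: one billion prompts a day, worldwide.
    print(f"1 billion prompts/day: {daily_fleet_mwh(1e9, PROMPT_WH):.0f} MWh/day")
```

At that hypothetical volume, one day of prompting comes to about 300 MWh. That's the "enormous collectively" part in a single number.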

End of life. AI GPUs typically stay in production for two to three years, not the five or six that accounting usually assumes, and the resulting e-waste from generative AI is projected to total 1.2 to 5 million metric tons between 2020 and 2030.
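
Short service lives also change how much of the manufacturing footprint each year of use carries. This sketch spreads the 1,312 kg figure from the hardware paragraph evenly across years in service. That straight-line allocation is our simplifying assumption, and formal lifecycle assessments may allocate embodied carbon differently.

```python
# Annualized embodied carbon: the same manufacturing footprint spread over fewer years.
EMBODIED_KG_CO2E = 1_312  # HGX H100 baseboard figure cited in the text


def annual_embodied_kg(embodied_kg: float, service_years: float) -> float:
    """Embodied carbon attributed to each year of service (straight-line allocation)."""
    return embodied_kg / service_years


if __name__ == "__main__":
    for years in (2, 3, 5, 6):
        per_year = annual_embodied_kg(EMBODIED_KG_CO2E, years)
        print(f"{years} years in service: {per_year:.0f} kg CO2e per year")
```

At three years, each year carries about 437 kg. At six years, it carries about 219 kg. Halving the lifespan doubles the annual share, and the hardware gets replaced twice as often.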

Hardware, training, and end of life are shaped by decisions made by the companies building these models. Inference is where the rest of us come in.

Who's actually carrying the eco-responsibility

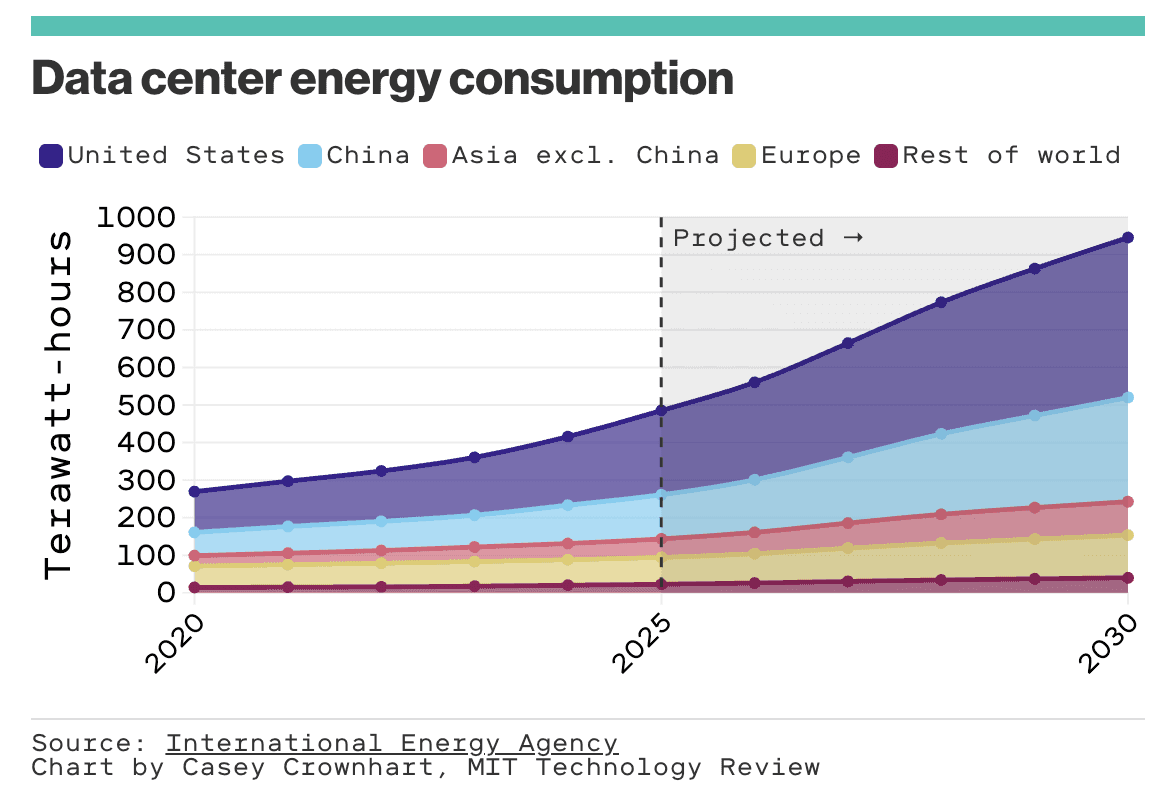

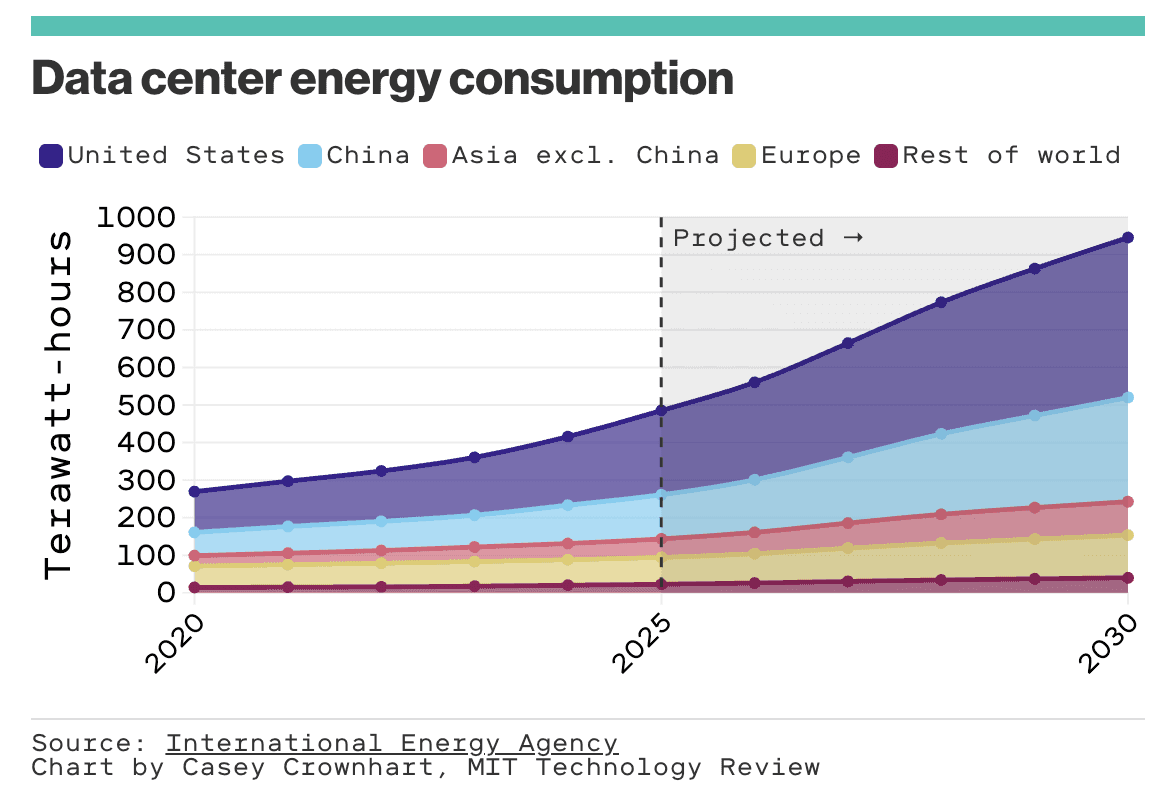

Model developers carry most of it. The companies training frontier models — OpenAI, Anthropic, Google, Meta, and the smaller labs — are making the environmental decisions with the biggest downstream consequences. Where to site data centers. What energy mix to run on. How often to retrain. How aggressively to refresh hardware. Whether to invest in closed-loop liquid cooling or evaporative cooling. These are the levers that actually move the needle. It's reasonable to expect more transparency and more accountability here. Some labs have started publishing their numbers so outside observers have visibility into their choices.

Organizations that deploy AI carry a real middle layer. Companies choosing which vendors to use, which models to license, and how their employees use AI day to day are making decisions at meaningful scale. An organization of 5,000 people running a reasoning model on every email uses drastically more energy than one where lightweight models handle routine work.
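
To make "drastically more" concrete, here's a rough sketch for that 5,000-person organization. It uses the 0.3 Wh per standard prompt and the 50 to 100 times multiplier for reasoning models that this article cites. The 20 AI-assisted emails per person per day is a hypothetical input. Treating one email as one standard prompt is also a simplification on our part.

```python
# Rough daily energy for an organization: standard model vs. reasoning model on every email.
PROMPT_WH = 0.3                   # standard prompt, figure cited in the text
REASONING_MULTIPLIER = (50, 100)  # range cited in the text for reasoning models

# Hypothetical inputs (assumptions, not from the source):
EMPLOYEES = 5_000
EMAILS_PER_PERSON_PER_DAY = 20  # each email treated as one prompt


def daily_kwh(people: int, prompts_each: int, wh_per_prompt: float) -> float:
    """Daily energy in kWh when every person sends `prompts_each` prompts."""
    return people * prompts_each * wh_per_prompt / 1_000  # Wh -> kWh


if __name__ == "__main__":
    standard = daily_kwh(EMPLOYEES, EMAILS_PER_PERSON_PER_DAY, PROMPT_WH)
    print(f"Standard model on every email: {standard:,.0f} kWh/day")
    for m in REASONING_MULTIPLIER:
        reasoning = daily_kwh(EMPLOYEES, EMAILS_PER_PERSON_PER_DAY, PROMPT_WH * m)
        print(f"Reasoning model at {m}x: {reasoning:,.0f} kWh/day")
```

Under these assumptions, the standard model comes to about 30 kWh a day. The reasoning model comes to 1,500 to 3,000 kWh a day for the same emails.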

Individuals carry a small but nonzero part. UPS flies hundreds of thousands of miles a week; no single consumer choosing the train instead of a flight is going to register against that. The same arithmetic applies to AI: popular models are processing trillions of tokens a day, and your personal prompting habits are not going to flip that math.

Every environmental problem we take seriously works this way. While no individual action causes or reverses climate change, and no single vote wins an election, we all have a civic duty to act together to build the world we want. Individual behavior sets the norms that eventually shape what organizations and developers decide to do next.

Your part: six ways to lower your AI footprint

Most of these are easy, AND have the happy side effect of making you better at using AI. Good prompting is efficient prompting.

Match the tool to the task. Don't use a reasoning model for spell-check. Reasoning models (the ones that "think" before they answer) use 50 to 100 times the energy of a standard prompt. Save them for actual reasoning problems.
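
For teams building internal tools, "match the tool to the task" can be the default rather than something each person has to remember. Here is a minimal sketch of a routing rule. It assumes an organization licenses one lightweight model and one reasoning model. The tier names and task categories are hypothetical placeholders, not any vendor's API.

```python
from enum import Enum


class Tier(Enum):
    """Hypothetical model tiers; map them to whatever models your organization licenses."""
    LIGHTWEIGHT = "lightweight"
    REASONING = "reasoning"


# Hypothetical task categories that justify a reasoning model. Everything else is routine.
REASONING_TASKS = {"multi-step analysis", "debugging", "planning"}


def pick_tier(task: str) -> Tier:
    """Reserve the reasoning tier for tasks that need it; default to lightweight."""
    return Tier.REASONING if task in REASONING_TASKS else Tier.LIGHTWEIGHT


if __name__ == "__main__":
    for task in ("spell-check", "summarize email", "debugging"):
        print(f"{task}: {pick_tier(task).value}")
```

Making the lightweight tier the default means the expensive path needs an explicit reason. That's the behavior this tip is asking people to adopt by hand.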

Be specific. Vague prompts generate long, expensive outputs. "Summarize this article in two sentences" is cheaper and better than "what does this article say?"

Set output constraints. "Keep it under 100 words." "Three bullet points." Shorter outputs cost less.

Use regular search for simple facts. A plain Google search is about 10 times more energy-efficient than a generative AI summary for "what's the capital of Portugal"-style questions.

Stop early when the answer drifts. If the model is heading somewhere you don't want, hit the stop button. Every word in a response has a cost. Don't pay for the ones you don't want.

Clean up unused AI content. Delete generated files you no longer need, and turn off auto-AI features you don't use. Cloud storage isn't free, and background features running while idle are a steady, invisible draw.

Your responsibility as an AI leader

If you're a leader, you have levers that an individual doesn't.

The first lever is offset policies. Many companies already have policies for activities with high environmental costs, business travel being the most familiar example. These policies tend to encourage lower-emissions alternatives (virtual-first meeting policies, train-over-plane mandates, approval gates for flights) and to account for the emissions that still happen (internal carbon pricing, offsets, sustainability reporting).

The same idea can apply to AI. If your organization already has a policy and a practice for flights, extending it to cover AI use is a smaller leap than it looks. If your organization doesn't have one yet, AI may be a good reason to start.
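
One way to extend an existing internal carbon price to AI is to convert prompt volume into estimated emissions and charge against it, the same way flights are charged. This is a minimal sketch with some loud assumptions. It counts only inference electricity, leaving out embodied carbon, training, and water. The grid intensity, carbon price, and usage volume are all hypothetical placeholders. Only the 0.3 Wh per standard prompt and the 100 times reasoning multiplier come from this article.

```python
# A sketch of internal carbon pricing applied to AI use (inference electricity only).
PROMPT_WH = 0.3                 # standard prompt, figure cited in the text
GRID_KG_CO2E_PER_KWH = 0.4      # assumption: grid carbon intensity, varies widely by region
CARBON_PRICE_PER_TONNE = 100.0  # assumption: internal carbon price, dollars per tonne CO2e


def ai_carbon_charge(prompts: int, wh_per_prompt: float,
                     kg_per_kwh: float, price_per_tonne: float) -> tuple[float, float]:
    """Return (tonnes CO2e, internal charge) for a given volume of prompts."""
    kwh = prompts * wh_per_prompt / 1_000  # Wh -> kWh
    tonnes = kwh * kg_per_kwh / 1_000      # kg -> tonnes
    return tonnes, tonnes * price_per_tonne


if __name__ == "__main__":
    # Hypothetical volume: 5,000 people, 20 prompts a day, 250 workdays a year.
    prompts_per_year = 5_000 * 20 * 250
    for label, wh in (("standard", PROMPT_WH), ("reasoning at 100x", PROMPT_WH * 100)):
        tonnes, charge = ai_carbon_charge(
            prompts_per_year, wh, GRID_KG_CO2E_PER_KWH, CARBON_PRICE_PER_TONNE
        )
        print(f"{label}: {tonnes:.1f} t CO2e, ${charge:,.0f} internal charge")
```

The dollar figure matters less than the pattern. Accounting already used for travel can absorb AI use, and model choice alone moves the result by two orders of magnitude.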

The second lever is vendor selection. When you choose a model provider, you're choosing their data centers, energy mix, and disclosure standards. Ask vendors about data-center efficiency, renewable energy share, and whether they publish environmental figures specific to AI workloads. If they can't answer, that's a signal. If enough buyers ask, disclosures start showing up.

A fair accounting

AI's environmental footprint is real. Most of it is decided by things that no single consumer can affect. The companies building these models carry the biggest share of the accountability, and it's fair to expect more from them. Organizations that deploy AI carry a meaningful middle layer, and leaders have specific ways to make their AI use more responsible. The rest of us carry the smallest pieces individually, but together those pieces add up to a meaningful impact.

Like any environmental question worth taking seriously, the answer isn't individual guilt or systemic fatalism. It's all of us doing our part.