When Building Is Cheap, Problem Definition Is Everything

Last week in Pittsburgh, the Aspen Institute, the policy non-profit, brought a group of state and county benefits administrators together for three days to compare notes on the challenges of delivering benefits at scale.

We were there to turn their operational problems into prototypes of solutions. It was a 36-hour sprint: We brainstormed Monday night, built Tuesday, and demoed Wednesday morning.

The instinct in a rapid prototyping sprint like this is to start building immediately. Thirty-six hours isn't much time, and the pressure to show something by Wednesday is real. We took a different approach. Across the 36 hours, we spent two-thirds of our time defining the problems and solutions before we started building.

When building was the slow, expensive part of the process, that allocation would have seemed irresponsible. Even a few years ago, you front-loaded just enough definition to unblock engineers and let them get to work. AI changed the math. Prototypes are cheap to spin up, which means the focus in a three-day sprint should move from the building to the thinking.

Here are three things that made our sprint work.

Get People Outside Their Own Constraints

Experts internalize their constraints so deeply they stop seeing them as constraints. Ask a benefits administrator what's broken in their call center and you'll get a list filtered through every legacy system and procurement rule they've ever fought.

It is often hard for people to imagine a creative solution to their own problems. The more "expert" you are in the issues you encounter, the more you're likely to have internalized all of the little pieces that make solving the problem impossible.

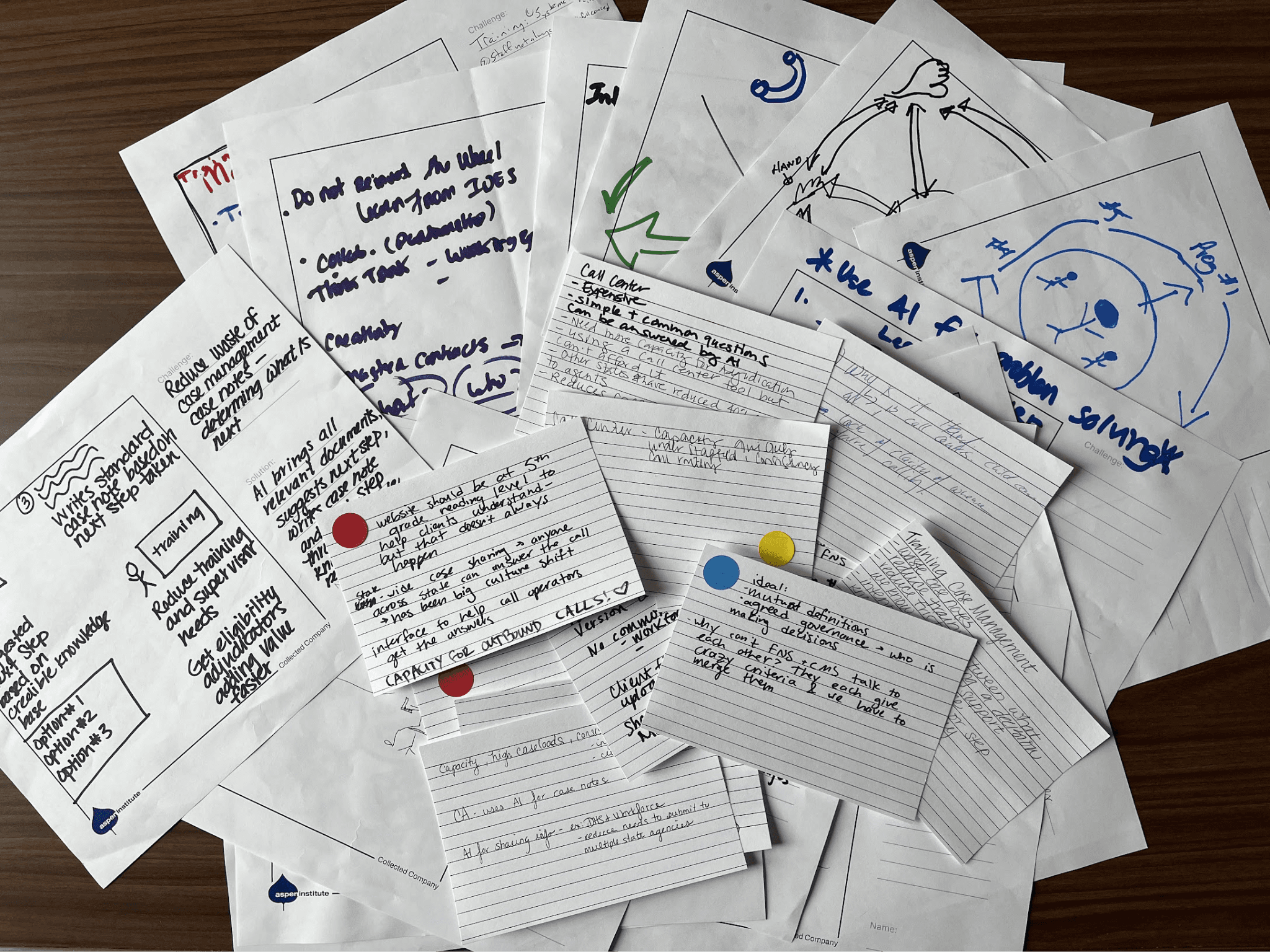

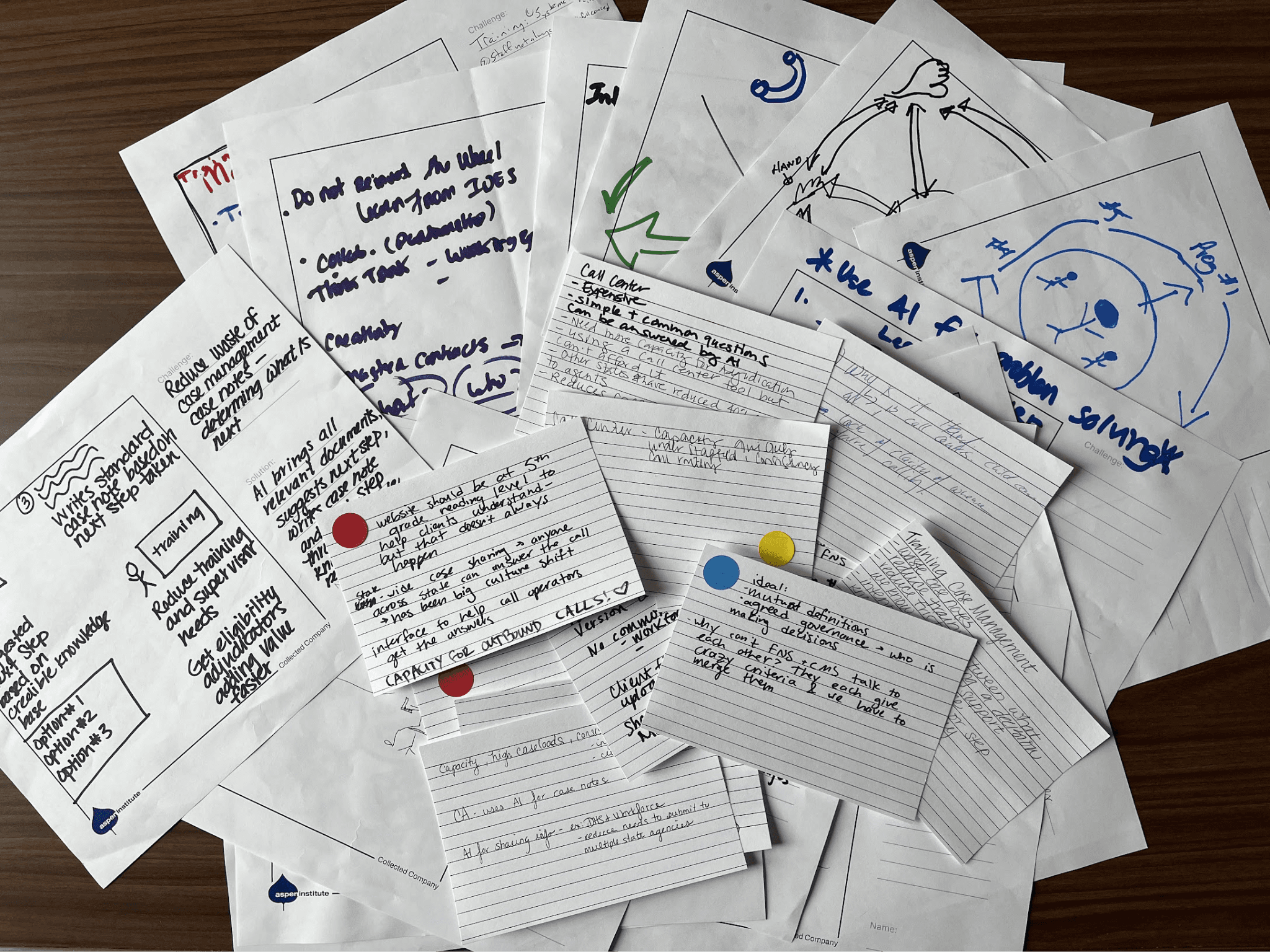

To overcome this, we started with The Reporter exercise. We gave each administrator three colored dots—red for call center operations, blue for document management, yellow for training and case management—and asked them to pick the area where they felt the most pain.

Their first reaction was telling: "I can only pick one?" These are people who run agencies and departments. Every category is on fire at once. Forcing a choice was itself a small act of definition.

Then we paired people with differently colored dots and asked them to interview each other with questions like: What is your top challenge in that area? Why is it hard? What have you or your team tried?

The second exercise pushed further. We asked each person to draw a solution to their partner's problem, with explicit permission to ignore budget, legacy systems, and the procurement calendar.

The sketches were rough, and that turned out to be useful. When we brought the group together to review, people filled in the ambiguity with their own imagination. A vague box became whatever the viewer needed it to be.

By the end of Monday evening, we had a set of problem statements that no one in the room would have produced about their own work.

Put the Domain Expert at the Table

Early Tuesday, I downloaded a PDF of what I thought was the current Social Security Act. It was the Social Security Act, but the 1935 original, ninety years and countless amendments out of date. I couldn't have told you the difference. Our subject-matter expert, Ayushi Roy, could, and did, before I built our prototype around it.

Ayushi did more than catch errors. She helped us identify which datasets we needed, walked us through the relationship between federal legislation, state legislation, and state policy, and explained which documents were authoritative for which questions. When the administrators shared their sketches on Monday, she helped us identify the patterns and categorize them into workflows.

The old pattern was to interview the SME at the start, build, then bring them back to review. That pattern existed because builds were long and SME time was expensive. With AI in the loop, the build is short enough that an SME can stay in the room for the whole thing, which changes what they're able to contribute. Instead of validating finished work, they shape what gets made.

Use Real Data From Hour One

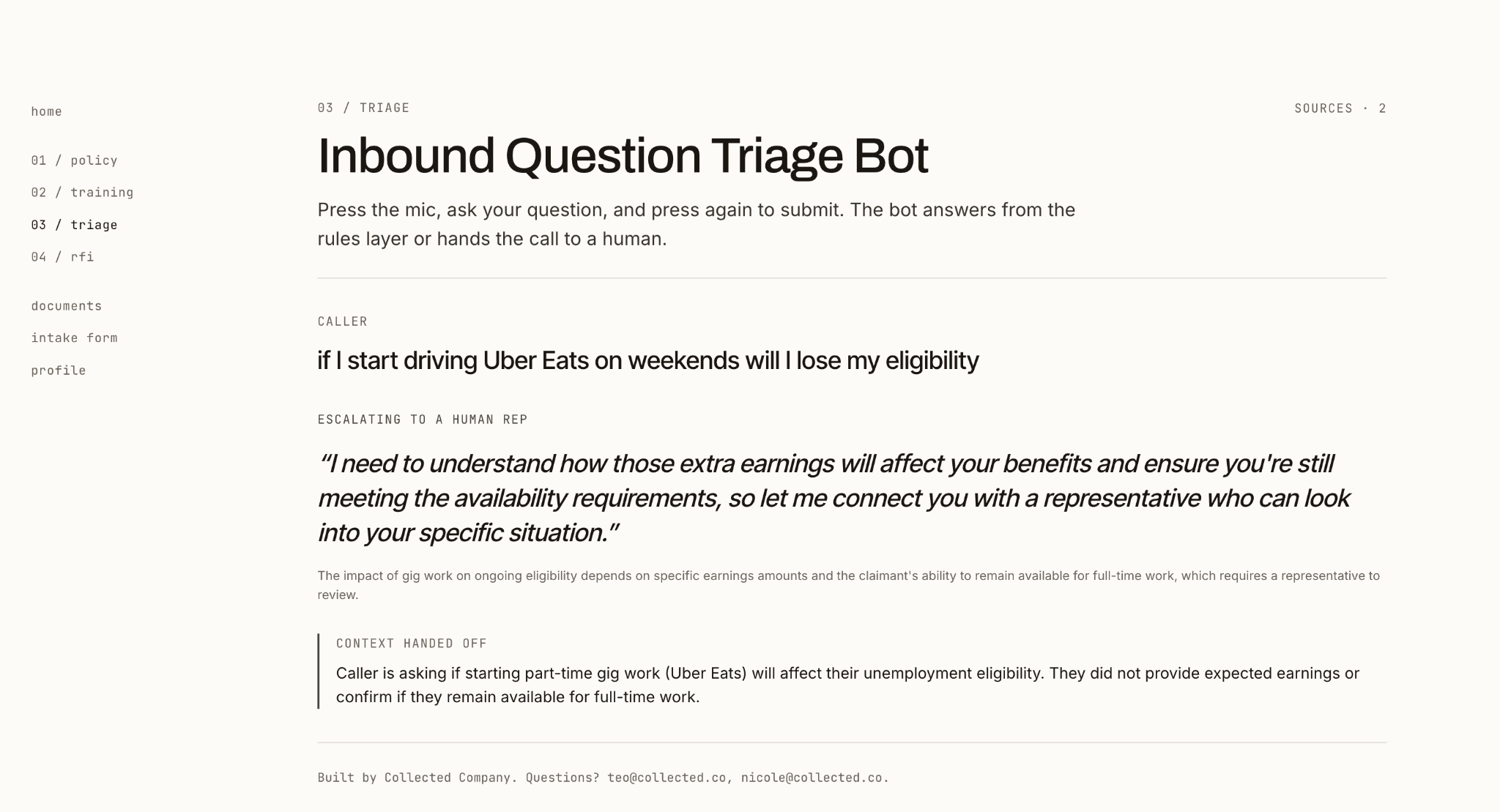

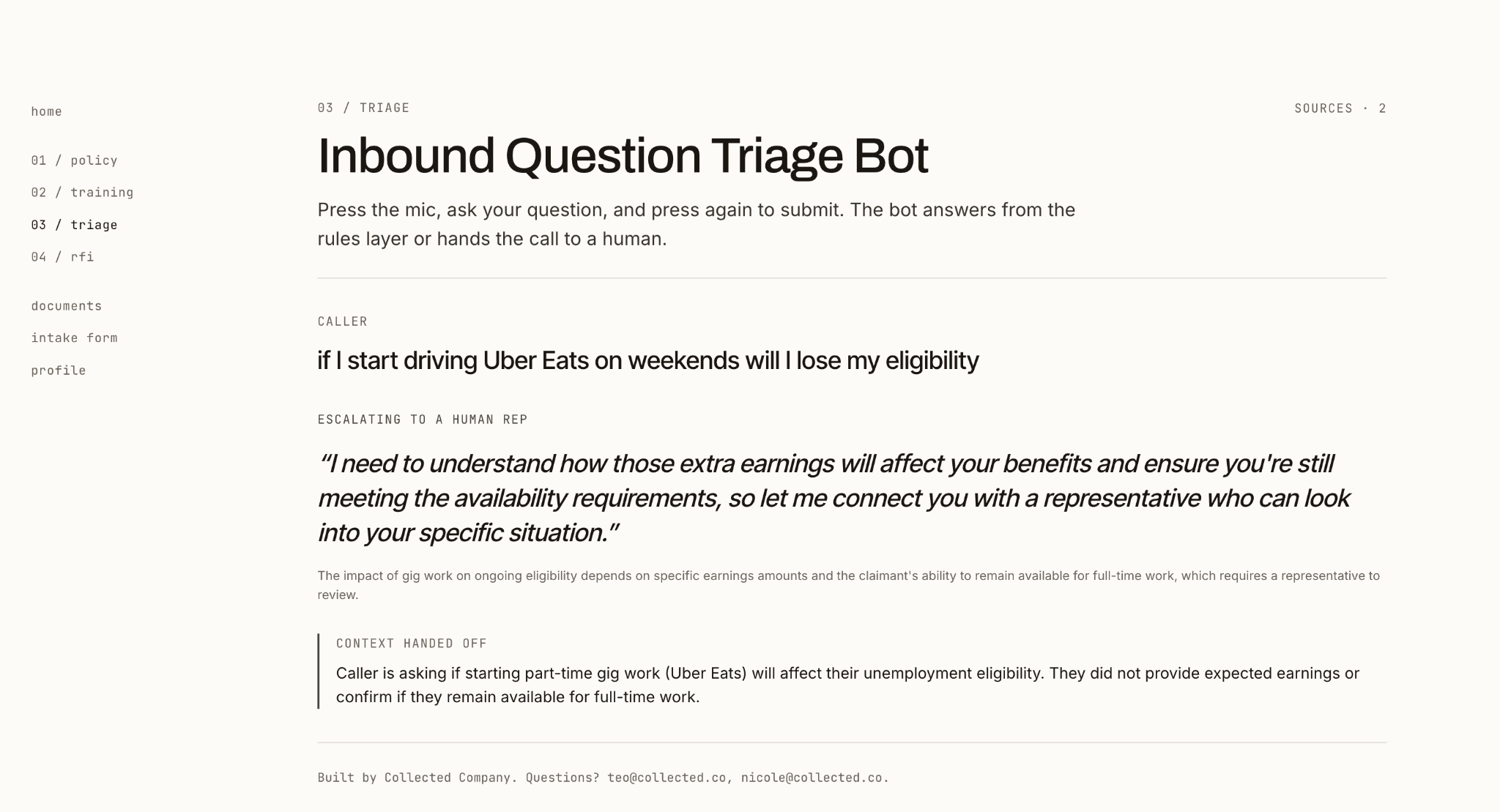

We built four prototypes. All of them required real data. One was a case management assistant that parsed an application form and flagged what additional questions the case manager needed to ask. To make that happen, you need a real application form. Two others, a call center training tool and a call center bot, depended on real policy language.

That meant we needed a state that had published all those documents in usable form. We spent time looking at Arizona, Texas, and Florida, but none of them had everything we needed. Then we found Oregon, which had published its unemployment application, eligibility policy, and relevant legislation online as PDFs. I spent part of Tuesday filling out the Oregon application as if I were applying for benefits, and we pulled requirements directly from the state's policy documents. Every prototype was built on language a real claimant would actually encounter.
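
To make the case management assistant concrete, here is a minimal sketch of the "flag what to ask next" step. It is not the prototype's implementation: it swaps in a plain lookup table for whatever parsing the real tool did, and every field name and question in it is hypothetical rather than taken from Oregon's application.

```python
# Hypothetical rule table: each required form field maps to the follow-up
# question a case manager should ask when that field is missing or blank.
# The field names and wording are illustrative, not from a real form.
FOLLOW_UPS = {
    "last_employer": "Who was your most recent employer?",
    "separation_reason": "Why did your last job end?",
    "last_day_worked": "What was your last day of work?",
}


def flag_follow_ups(application: dict[str, str]) -> list[str]:
    """Return follow-up questions for required fields that are missing or blank."""
    questions = []
    for field, question in FOLLOW_UPS.items():
        value = application.get(field, "")
        if not value.strip():
            questions.append(question)
    return questions


# A partially completed application: one field answered, one left blank,
# and one missing entirely.
application = {
    "last_employer": "Acme Delivery",
    "separation_reason": "",
}

for question in flag_follow_ups(application):
    print(question)
```

Even in a toy like this, the value sits in the rule table, which is exactly the part that needs a real form and a subject-matter expert to get right.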

The Demo

The demos went well. We walked through each prototype up on the big screen. I role-played a call with our training bot, trying to answer questions about whether driving for Uber Eats would affect the simulated caller's eligibility for unemployment insurance. My answers were bad enough that the room laughed. Then we let the administrators break into groups, pull the prototypes up on their phones, and explore the demos on their own.

That's the moment the previous three days paid off. The administrators were debating whether the eligibility logic was right and telling us how they'd want to see this rolled out across their field offices. That kind of specificity only comes from building against a real problem the room recognizes.

What Changes When Building Gets Cheap

Now that AI has made building fast, we can spend more time on the crucial, strategic, creative part: defining the problem and designing the solution. And that’s true whether your development cycle is 36 hours or three to six months.
